In the Indian banking sector, the mobile app is no longer just a convenience layer; it is the primary channel through which a majority of customers interact with their bank. According to RBI data, UPI alone processes over 13.5 billion transactions per month, with year-on-year growth of 35%. At this scale, a slow or unstable banking application is not a UX problem; it is a revenue, compliance, and reputational risk.

Yet many institutions continue to operate applications that were engineered for a fraction of today’s transaction volumes. The warning signs are rarely dramatic; they accumulate quietly until a peak-load event or a regulatory audit forces the issue into the open.

Below are 10 measurable, data-backed signs that your banking app requires a performance engineering overhaul, along with clear direction on what to do about each.

Sign 1: Transaction Response Times Consistently Exceed 3 Seconds

Industry benchmarks from Google’s research on mobile performance indicate that 53% of users abandon a mobile session if a page takes longer than 3 seconds to load. In banking, the threshold is even less forgiving: a delayed fund transfer confirmation is perceived not as lag, but as a failed transaction. If your app’s average transaction response time (TRT) sits above 3 seconds under normal load, the architecture carries performance debt that needs to be addressed.

Fix: Conduct a baseline application performance engineering assessment to identify bottlenecks at the API, database query, and network layers. Implement response time SLAs at the service level, not just the UI layer.

Are You Also Struggling With Your Banking App’s Performance?

Identify performance gaps before they impact transactions, compliance, and customer trust.

Sign 2: Your App Has Never Been Load-Tested to Realistic Peak Volumes

Most banking apps are stress-tested at some point during initial development. However, if load testing has not been revisited since the last major release or since the user base doubled, those test results are no longer representative. Salary credits, GST due dates, and IPO application windows routinely generate traffic spikes 8 to 12 times the daily average. An app that has not been validated against those volumes is an app that is waiting to fail.

Fix: Implement a continuous performance testing and engineering programme that models real-world concurrency patterns, including peak-hour salary disbursement events and month-end transaction surges.
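
As a rough illustration of how the 8x to 12x spike range above can be turned into load-test targets, the sketch below applies Little's Law (requests in flight = arrival rate x time in system). The 500 TPS daily average and the 3-second response time are hypothetical inputs chosen for the example, not figures from any bank.

```python
def peak_load_target(avg_tps: float, peak_multiplier: float, response_time_s: float) -> dict:
    """Translate an average throughput into peak load-test targets."""
    peak_tps = avg_tps * peak_multiplier
    # Little's Law: requests in flight = arrival rate x time each request spends in the system.
    concurrent_requests = peak_tps * response_time_s
    return {"peak_tps": peak_tps, "concurrent_requests": concurrent_requests}


# Hypothetical inputs: 500 TPS daily average, 3-second response time.
for multiplier in (8, 12):
    print(multiplier, peak_load_target(500, multiplier, 3.0))
```

A load test that never reaches the upper end of this range leaves salary-day and IPO-window behaviour unvalidated.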

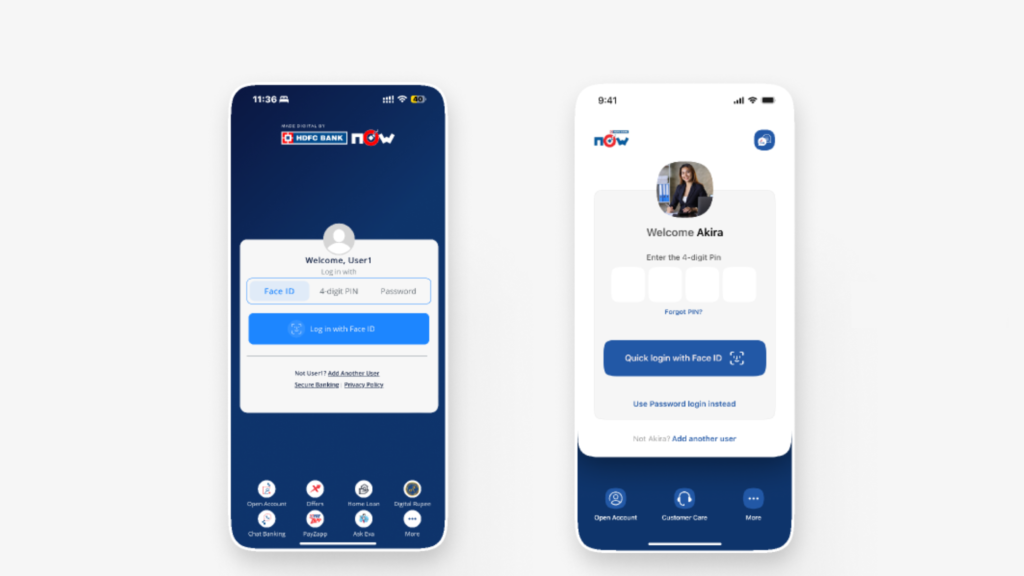

Sign 3: Users Report Frequent Login Failures During Peak Hours

Login failures under peak load are a symptom of session management and authentication infrastructure that has not been scaled in line with the user base. This is particularly relevant after RBI’s Digital Banking Channels Authorisation Directions, 2025 (effective January 1, 2026), which require banks to demonstrate robust onboarding and real-time alert delivery as a condition of authorisation. A login that fails at peak hours is a direct regulatory risk.

Fix: Stress-test authentication flows independently from transactional flows. Implement circuit-breaker patterns at the identity layer and evaluate site reliability engineering practices to ensure authentication services maintain uptime independent of other application components.
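
The circuit-breaker pattern mentioned above can be sketched in a few lines. This is a minimal, single-threaded illustration, not a production implementation: the class name, the thresholds, and the injectable `clock` parameter are all assumptions made for the example.

```python
import time


class CircuitOpenError(Exception):
    """Raised when the breaker rejects a call without trying the service."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_timeout_s=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout_s = reset_timeout_s
        self.clock = clock          # injectable so the breaker can be tested without waiting
        self.failures = 0
        self.opened_at = None       # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout_s:
                # Fail fast instead of queueing more load on a struggling service.
                raise CircuitOpenError("authentication service circuit is open")
            # Reset timeout elapsed: half-open, so let one trial call through.
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()   # open (or re-open) the circuit
            raise
        # A success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

Wrapping calls to the identity provider in `breaker.call(...)` means that once the service starts failing, login requests are rejected immediately rather than piling up behind it.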

Sign 4: Post-Release, Production Incidents Spike Regularly

If every new release is followed by a wave of production incidents, performance regression testing is either absent or insufficiently automated. Research from Splunk and Oxford Economics indicates that financial services firms lose an average of $152 million annually from system downtime, with direct revenue impact accounting for approximately $37 million of that figure. Recurring post-release incidents are not development failures; they are a process failure in quality assurance.

Fix: Establish a Quality Digital Assurance Centre of Excellence that integrates performance regression gates into the CI/CD pipeline, preventing releases that degrade response time or error rate baselines.
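
A performance regression gate can be as simple as comparing a candidate build's metrics against the stored baseline and failing the pipeline when the difference exceeds a tolerance. The sketch below assumes two "lower is better" metrics and a 10% tolerance; the metric names and numbers are hypothetical.

```python
def regression_gate(baseline: dict, candidate: dict, max_regression: float = 0.10) -> list:
    """Return a list of violations; an empty list means the release may proceed."""
    violations = []
    for metric, base_value in baseline.items():
        new_value = candidate[metric]
        # Every metric here is "lower is better", so a rise beyond the tolerance fails.
        limit = base_value * (1 + max_regression)
        if new_value > limit:
            violations.append(f"{metric}: {new_value} exceeds limit {limit:.3f}")
    return violations


# Hypothetical numbers: P95 latency regressed from 800 ms to 950 ms.
baseline = {"p95_ms": 800, "error_rate": 0.002}
candidate = {"p95_ms": 950, "error_rate": 0.002}
for violation in regression_gate(baseline, candidate):
    print("BLOCKED:", violation)
```

In a CI/CD job, a non-empty result would typically be turned into a non-zero exit code so that the release stage does not run.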

Sign 5: Third-Party API Failures Cascade Across the Entire App

Modern banking apps integrate with credit bureaus, payment gateways, KYC providers, insurance aggregators, and NPCI systems. If a failure in one external API causes the entire application to degrade or become unresponsive, there is no fault isolation or fallback strategy in place. API downtime in the finance sector increased by 60% between Q1 2024 and Q1 2025, making this scenario more likely than ever.

Fix: Implement timeout, retry, and fallback patterns at every third-party integration point. Use application performance monitoring tools to instrument each integration endpoint individually, enabling rapid isolation when a downstream dependency degrades.
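
The retry and fallback parts of this fix can be sketched as a small wrapper. It assumes the timeout itself is enforced inside the wrapped call (most HTTP clients accept a timeout argument); the function name, retry count, and backoff values are illustrative assumptions.

```python
import time


def call_with_retry(func, retries=2, backoff_s=0.2, fallback=None, sleep=time.sleep):
    """Call func(); retry on failure with exponential backoff, then fall back.

    Assumes func() enforces its own timeout and is safe to repeat (idempotent).
    """
    for attempt in range(retries + 1):
        try:
            return func()
        except Exception:
            if attempt < retries:
                sleep(backoff_s * (2 ** attempt))   # 0.2 s, 0.4 s, ...
    # Every attempt failed: degrade gracefully instead of failing the whole app.
    if fallback is None:
        raise RuntimeError("dependency unavailable and no fallback configured")
    return fallback()
```

Retries suit read-style calls such as a credit bureau lookup, where the fallback might be a cached result or a "temporarily unavailable" state for that one feature. Non-idempotent operations such as payment initiation should not be retried blindly.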

Sign 6: You Have No Visibility Into Real User Experience Across Devices and Networks

Lab-based testing tells you how your app performs under controlled conditions. It does not tell you how a customer on a 4G connection in a Tier 3 city experiences a net banking session. If your engineering team cannot answer questions about P95 response time by device type, network category, or geography, you are flying blind on actual customer experience.

Fix: Deploy mobile real user monitoring to capture live performance data from actual users across device and network segments. Complement this with synthetic monitoring to proactively simulate user journeys before issues reach production.
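
To make "P95 response time by device type, network category, or geography" concrete, the sketch below groups real-user samples by one field and computes a nearest-rank 95th percentile per group. The sample record shape (`network`, `response_ms`) is a hypothetical format, not the schema of any particular monitoring product.

```python
from collections import defaultdict


def p95(values):
    """Nearest-rank 95th percentile of a non-empty list."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100   # integer ceiling of 0.95 * n
    return ordered[rank - 1]


def p95_by_segment(samples, key):
    """Group samples by one field (e.g. 'network') and return the P95 per group."""
    groups = defaultdict(list)
    for sample in samples:
        groups[sample[key]].append(sample["response_ms"])
    return {segment: p95(times) for segment, times in groups.items()}


# Hypothetical samples: 4G sessions are far slower than Wi-Fi sessions.
samples = [{"network": "4g", "response_ms": ms} for ms in range(100, 2100, 100)]
samples.append({"network": "wifi", "response_ms": 200})
print(p95_by_segment(samples, "network"))
```

A single app-wide average would hide exactly the gap this breakdown exposes.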

Sign 7: Your App Degrades Significantly on Older Android Versions or Low-End Devices

India has one of the world’s most fragmented device ecosystems. A significant proportion of banking app users operate on devices with 2GB RAM or less, running Android versions two or three generations behind current. If performance engineering has only targeted flagship devices, a large segment of your actual user base is underserved. This is not just a UX issue; it affects financial inclusion metrics that regulators monitor.

Fix: Expand functional testing and performance validation to cover a representative matrix of low-end devices and older OS versions. Set minimum performance thresholds for these segments, not just for current-generation hardware.

Sign 8: Monitoring Alerts Are Either Non-Existent or Ignored

Many banks have monitoring tools deployed but no structured alerting or escalation protocols. Alert fatigue, caused by poorly configured thresholds, leads teams to tune out notifications. When a genuine degradation occurs, it is customers who report it first, not the operations team. This is a sign that observability is treated as an infrastructure checkbox rather than an operational discipline.

Fix: Implement structured observability with tiered alerting: anomaly detection for early warning, threshold alerts for SLA breach, and escalation workflows with defined response time expectations by severity. Review and tune alert configurations quarterly.
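
The tiering described above can be sketched as a small classification rule. Real anomaly detection is usually statistical; here it is simplified to "P95 is well above its recent baseline", and the 1.5x ratio, the 3000 ms SLA, and the escalation times are all assumptions made for the example.

```python
def classify_alert(p95_ms: float, baseline_ms: float, sla_ms: float,
                   anomaly_ratio: float = 1.5) -> str:
    """Map one P95 reading to a tier: 'ok', 'warning', or 'critical'."""
    if p95_ms > sla_ms:
        return "critical"   # SLA breach: escalate according to severity policy
    if p95_ms > baseline_ms * anomaly_ratio:
        return "warning"    # unusual versus baseline, but still inside the SLA
    return "ok"


# Hypothetical escalation policy: minutes allowed before acknowledgement.
ESCALATION_MINUTES = {"warning": 30, "critical": 5}

for reading in (1100, 1800, 3500):
    tier = classify_alert(reading, baseline_ms=1000, sla_ms=3000)
    print(reading, tier, ESCALATION_MINUTES.get(tier))
```

Keeping the early-warning tier separate from the SLA-breach tier is what lets teams tune each threshold without muting the alerts that matter.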

Sign 9: Application Performance Has Never Been Validated After a Cloud Migration

Cloud migrations are often treated as infrastructure exercises. Performance validation is treated as a post-migration activity that gets deprioritised once the cutover is complete. However, network latency patterns, database connection pool behaviour, and auto-scaling lag in cloud environments can introduce performance regressions that do not appear in functional testing.

Fix: Build performance validation into your application migration assurance process. Define pre-migration performance baselines and run equivalent load tests against the cloud environment before live traffic is switched.

Sign 10: Customer Complaints About App Slowness Are Rising Quarter-on-Quarter

Customer complaints are a lagging indicator, but a reliable one. If app performance-related complaints have increased over two or more consecutive quarters, the problem is structural, not a one-off incident. Given that 80% of Gen Z banking customers cite mobile app quality as their primary criterion for choosing a bank, rising complaints translate directly to attrition risk.

Fix: Map complaint themes to specific user journeys using digital experience monitoring data. Quantify the revenue impact of each degraded journey and build a prioritised remediation backlog with measurable SLA targets for each.

Performance Engineering Maturity: A Quick Benchmark

| Maturity Level | Characteristics | Risk Exposure |
| --- | --- | --- |
| Level 1 – Ad Hoc | No formal testing; issues found in production | High – outages, regulatory breaches |
| Level 2 – Reactive | Testing done pre-release only; no continuous monitoring | Medium-High – regression risks |
| Level 3 – Defined | Scripted load tests, basic monitoring, manual reviews | Medium – gaps in real-user data |
| Level 4 – Managed | Continuous testing, RUM, synthetic monitoring, SLAs defined | Low-Medium – reactive to anomalies |
| Level 5 – Optimised | Full observability, APM integrated into SDLC, auto-remediation | Low – proactive and predictive |

The Business Case for Acting Now

With RBI’s Digital Banking Channels Authorisation Directions in force from January 2026, banks that experience frequent outages and high fraud rates face stricter enforcement and restricted authorisation to offer digital services. The regulatory landscape has moved from guidance to governance: performance is now a compliance requirement, not just a competitive differentiator.

Avekshaa Technologies’ P.A.S.S. Assurance Platform is purpose-built to help banking and financial services institutions diagnose performance gaps, run production-equivalent load simulations, and establish continuous assurance pipelines. Whether you are managing legacy infrastructure, a cloud-native stack, or a hybrid environment, the starting point is always the same: measure what is happening today before designing what should happen tomorrow.